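Nature Methods published on April 13, 2026 the latest work from Na Ji's group at the University of California, which developed neural-field-based adaptive optical correction for in vivo two-photon fluorescence microscopy.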

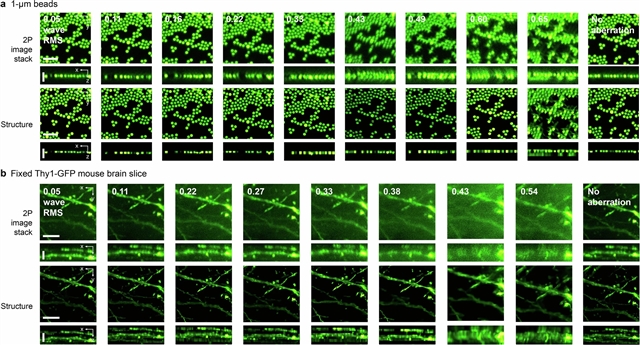

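Here, the team describes NeAT, a computational framework that uses neural fields for adaptive optics two-photon fluorescence microscopy. NeAT estimates wavefront aberration and recovers sample structure from a 3D image stack, without requiring external datasets for training. Because it incorporates motion correction into learning and corrects the conjugation errors commonly found in commercial microscopes, NeAT is designed for deployment in biological laboratories for in vivo imaging. The team validated NeAT's performance on a custom-built microscope equipped with a wavefront sensor, under varying signal-to-noise ratios, aberration, and motion conditions. On a commercial microscope, the group demonstrated real-time aberration correction for in vivo morphological and functional imaging in the living mouse brain, with NeAT improving the signal and accuracy of glutamate and calcium imaging of synapses and neurons.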

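By way of background, adaptive optics restores ideal imaging performance in complex samples by measuring and correcting optical aberrations, but it often requires custom-built microscopes with carefully aligned wavefront sensing/shaping devices and can be susceptible to sample motion.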
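The general principle of measuring an aberration and then cancelling it can be illustrated with a small numerical sketch. This is not NeAT's method and is not taken from the paper: it assumes the wavefront is described by a few Noll-normalized Zernike coefficients (in radians, piston excluded) and uses the extended Maréchal approximation to relate RMS wavefront error to the Strehl ratio. The coefficient values and function names are hypothetical, chosen only for illustration.

```python
import math


def rms_wavefront_error(coeffs_rad):
    """RMS wavefront error in radians.

    Assumes Noll-normalized Zernike coefficients with piston excluded,
    so the RMS is the root of the sum of squared coefficients.
    """
    return math.sqrt(sum(c * c for c in coeffs_rad))


def strehl_marechal(coeffs_rad):
    """Extended Marechal approximation: S ~ exp(-sigma^2), sigma in radians.

    Only a reasonable estimate for small to moderate aberrations.
    """
    sigma = rms_wavefront_error(coeffs_rad)
    return math.exp(-sigma ** 2)


def residual(aberration, estimate):
    """Wavefront left over after applying the estimated correction."""
    return [a - e for a, e in zip(aberration, estimate)]


# Hypothetical values: true aberration and an imperfect estimate of it.
aberration = [0.6, -0.4, 0.3]
estimate = [0.55, -0.35, 0.25]

before = strehl_marechal(aberration)
after = strehl_marechal(residual(aberration, estimate))
print(f"Strehl before correction: {before:.3f}")
print(f"Strehl after correction:  {after:.3f}")
```

In this toy model, the closer the estimate is to the true aberration, the smaller the residual wavefront error and the closer the Strehl ratio gets to 1. In a real system the correction would be applied with a wavefront-shaping device.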

Appendix: original English text

Title: Adaptive optical correction for in vivo two-photon fluorescence microscopy with neural fields

Author: Kang, Iksung, Kim, Hyeonggeon, Natan, Ryan, Zhang, Qinrong, Yu, Stella X., Ji, Na

Issue&Volume: 2026-04-13

Abstract: Adaptive optics restore ideal imaging performance in complex samples by measuring and correcting optical aberrations but often require custom-built microscopes with carefully aligned wavefront sensing/shaping devices and can be susceptible to sample motion. Here we describe NeAT, a computational framework using neural fields for adaptive optics two-photon fluorescence microscopy. NeAT estimates wavefront aberration and recovers sample structure from a 3D image stack without requiring external datasets for training. Incorporating motion correction in learning and correcting conjugation errors commonly found in commercial microscopes, NeAT is designed for deployment in biological laboratories for in vivo imaging. We validate NeAT’s performance using a custom-built microscope with a wavefront sensor under varying signal-to-noise ratios, aberration and motion conditions. With a commercial microscope, we demonstrate real-time aberration correction for in vivo morphological and functional imaging in the living mouse brain, with NeAT improving the signal and accuracy of glutamate and calcium imaging of synapses and neurons.

DOI: 10.1038/s41592-026-03053-6

Source: https://www.nature.com/articles/s41592-026-03053-6

Nature Methods: launched in 2004 and published by Springer Nature. Latest impact factor: 47.99

Official website: https://www.nature.com/nmeth/

Submission link: https://mts-nmeth.nature.com/cgi-bin/main.plex